Communications and Signal Processing Seminar

Surprises in High-Dimensional (Overparameterized) Linear Classification

This event is free and open to the public.

ABSTRACT: Seemingly counter-intuitive phenomena in deep neural networks have prompted a recent re-investigation of classical machine learning methods, such as linear models and kernel methods. Of particular interest are sufficiently high-dimensional setups in which interpolation of training data is possible. In this talk, we will first briefly review recent works showing that zero regularization, or fitting of noise, need not be harmful in regression tasks. Then, we will use this insight to uncover two new surprises for high-dimensional linear classification:

- least-2-norm interpolation can classify consistently even when the corresponding regression task fails, and

- the support-vector-machine and least-2-norm interpolation solutions exactly coincide in sufficiently high-dimensional models.

These findings taken together imply that the (linear/kernel) SVM can generalize well in settings beyond those predicted by training-data-dependent complexity measures. Time permitting, we will also discuss preliminary implications of these results for adversarial robustness, and the influence of the choice of training loss function in the overparameterized regime.
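As an informal illustration of the second item above (not taken from the talk or from the papers' code), the sketch below computes the least-2-norm interpolator of binary labels and checks a standard KKT condition: if every dual coefficient has the same sign as its label, the interpolator also solves the hard-margin SVM problem, so every training point is a support vector. The isotropic Gaussian features, the sizes `n` and `d`, and the function names are assumptions chosen only for illustration.

```python
import numpy as np


def min_norm_interpolator(X, y):
    """Least-2-norm w with X @ w = y, assuming X (n x d, n <= d) has full row rank."""
    # Dual coefficients: one per training point, beta = (X X^T)^{-1} y.
    beta = np.linalg.solve(X @ X.T, y)
    return X.T @ beta, beta


def interpolator_solves_svm(y, beta):
    """KKT check for labels in {-1, +1}.

    The interpolator meets every margin constraint with equality, so it is the
    hard-margin SVM solution whenever the implied multipliers y_i * beta_i
    are all positive.
    """
    return bool(np.all(y * beta > 0))


# Hypothetical data model: isotropic Gaussian features, random labels, d >> n.
rng = np.random.default_rng(0)
n, d = 20, 5000
X = rng.standard_normal((n, d))
y = rng.choice([-1.0, 1.0], size=n)

w, beta = min_norm_interpolator(X, y)
fits_labels = bool(np.allclose(X @ w, y))      # interpolation of the training labels
coincides = interpolator_solves_svm(y, beta)   # all points are support vectors
print(fits_labels, coincides)
```

Shrinking `d` toward `n` in this sketch typically makes the sign check fail for some points, which is the regime where the two solutions differ.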

This is joint work with Misha Belkin, Daniel Hsu, Adhyyan Narang, Anant Sahai, Vignesh Subramanian, Christos Thrampoulidis, Ke Wang and Ji Xu.

Links to papers:

- “Harmless interpolation of noisy data in regression” (will be briefly reviewed), IEEE Journal on Selected Areas in Information Theory 2020.

- “Classification-vs-regression in overparameterized regimes: Does the loss function matter?” (will be discussed in detail), Journal of Machine Learning Research 2021.

- “On the proliferation of support vectors in high dimensions” (will be discussed in detail), AISTATS 2021.

- “Benign overfitting in multiclass classification: All roads lead to interpolation” (will be discussed briefly or in detail depending on time), conference version at NeurIPS 2021, longer version submitted to IEEE Transactions on Information Theory.

- “A farewell to the bias-variance tradeoff? An overview of the theory of overparameterized learning” (will be mentioned), preprint of survey paper.

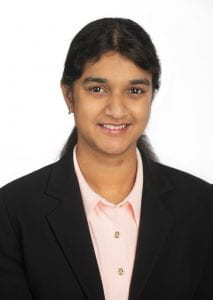

BIO: Vidya Muthukumar is an Assistant Professor in the Schools of Electrical and Computer Engineering and Industrial and Systems Engineering at the Georgia Institute of Technology. Her broad interests are in game theory and in online and statistical learning. She is particularly interested in designing learning algorithms that provably adapt in strategic environments, fundamental properties of overparameterized models, and fairness, accountability, and transparency in machine learning.

Vidya received her Ph.D. in Electrical Engineering and Computer Sciences from the University of California, Berkeley. She is the recipient of a Simons-Berkeley Research Fellowship (for the Fall 2020 program on “Theory of Reinforcement Learning”), an IBM Science for Social Good Fellowship, and a Georgia Tech Class of 1969 Teaching Fellowship for the academic year 2021-2022.

Join Zoom Meeting https://umich.zoom.us/j/91771072666

Meeting ID: 917 7107 2666

Passcode: XXXXXX (Will be sent via e-mail to attendees)

Zoom passcode information is also available on request from Katherine Godwin ([email protected]).
